Signify

In our current hybrid world, communicating on video calls has become the norm. But for a significant number of people, communication is forced to stay limited to chats and text boxes. Signify enables people with hearing difficulties to communicate naturally on video calling platforms.

DURATION

5 weeks

TEAM

Danica Martins

Ranveer Singh

ROLE

User research

UX Design

Code Experimentation

TOOLS

Figma

Procreate

Adobe Photoshop

Adobe Illustrator

200M

people use video calling platforms

430M

people have disabling hearing loss

THE PROBLEM

In our current remote & hybrid world, communicating on video calls has become the norm. But for a significant number of people, communication is forced to stay limited to chats and text boxes.

To address this, we wanted to imagine a feature that could potentially be integrated into existing applications for a seamless experience.

THE DESIGN

Signify

A concept for a gesture recognition-based extension that enables sign language use on video calling platforms. (Conceptualized for Microsoft Teams)

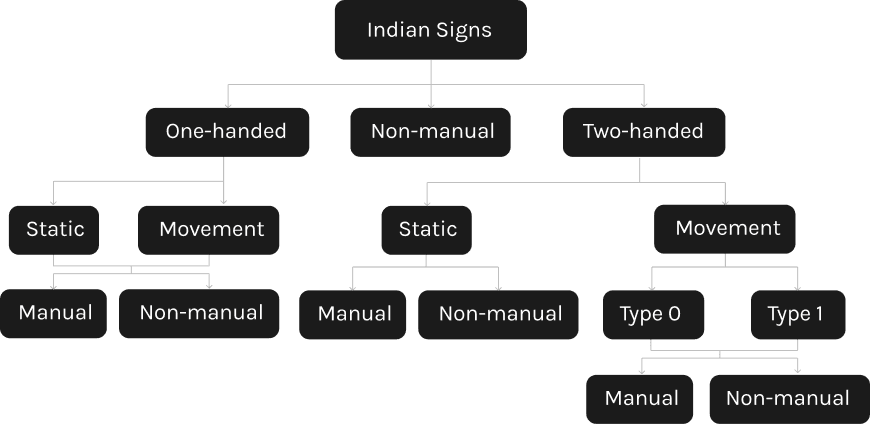

How does Indian Sign Language (ISL) work?

Understanding the scope of ISL was essential to get a sense of what we were tackling. The language spans the plain alphabet, single-handed and double-handed words, static and movement-oriented signs, and much more.

For the prototype, we decided to code for five specific words: Hello, Yes, No, Thank you, and I love you.

User Persona

FINDING THE GAP

The current experience

Although interpretation features exist on a few platforms such as Zoom, they still require an intermediary (an interpreter) to facilitate communication.

We found no integrated feature that allowed users to communicate directly on the call in their most natural or preferred manner.

The Gap

Independent communication

Features enabling self-sufficient communication without reliance on other participants or interpreters at all times.

Voice Output

Feature that accommodates the communication preferences of most call participants.

STORYBOARDING

How will Signify impact the experience?

PROTOTYPING

Experimenting with ML

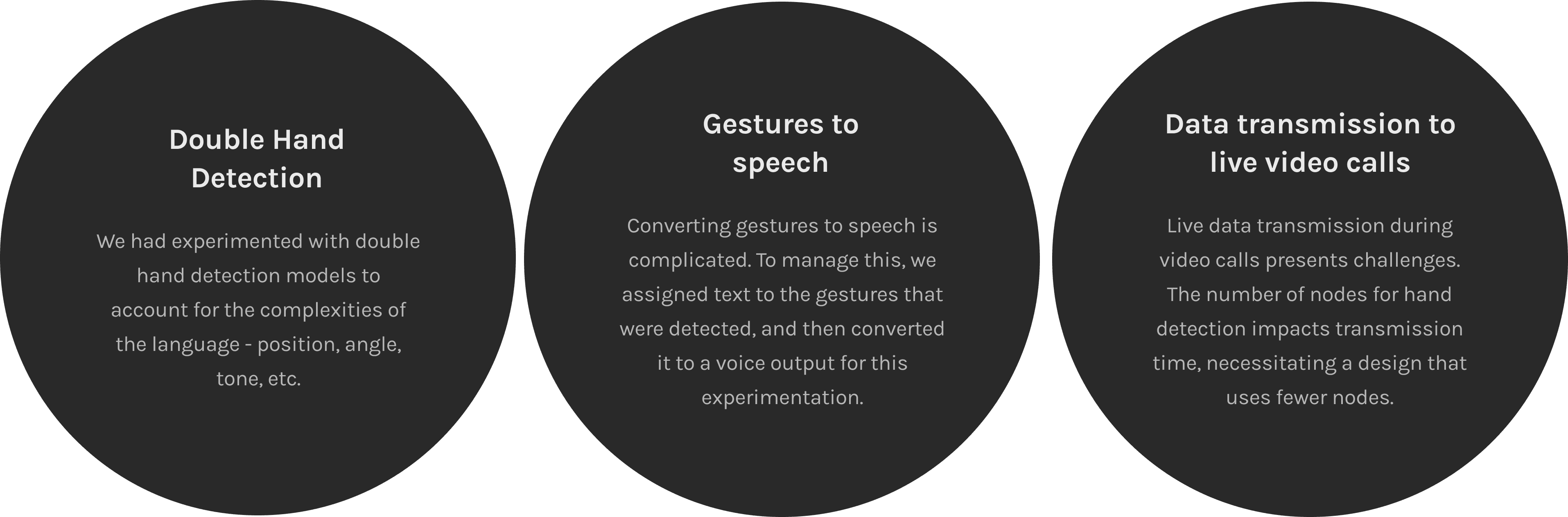

We followed tutorials to experiment with gesture recognition using two stacks: TensorFlow.js with React and Fingerpose, and MediaPipe with Python.

Hand Detection Model

This model detects single-hand gestures and outputs the corresponding symbols or emoticons.
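To give a feel for how a single-hand detector can turn hand landmarks into a symbol, here is a minimal sketch in plain Python. It assumes the 21-point hand layout used by MediaPipe Hands (point 0 is the wrist, followed by four points per finger) and a simple heuristic: a finger counts as extended when its tip is farther from the wrist than its lower joint. The `GESTURES` table and the `synthetic_hand` helper are hypothetical and exist only for illustration; this is not the code from our prototype, and the gestures shown are generic ones rather than ISL signs.

```python
import math

# MediaPipe Hands layout: index 0 is the wrist, then four points per finger.
FINGER_BASE = {"thumb": 1, "index": 5, "middle": 9, "ring": 13, "pinky": 17}

# Hypothetical lookup from the set of extended fingers to an output symbol.
GESTURES = {
    frozenset({"thumb"}): "👍",
    frozenset({"index", "middle"}): "✌️",
}


def extended_fingers(landmarks):
    """Return the fingers whose tip lies farther from the wrist than the lower joint."""
    wrist = landmarks[0]
    result = set()
    for name, base in FINGER_BASE.items():
        lower_joint = landmarks[base + 1]
        tip = landmarks[base + 3]
        if math.dist(wrist, tip) > math.dist(wrist, lower_joint):
            result.add(name)
    return frozenset(result)


def classify(landmarks):
    """Map 21 (x, y) landmarks to a symbol, or None if no gesture matches."""
    return GESTURES.get(extended_fingers(landmarks))


def synthetic_hand(extended):
    """Build fake landmarks for a demo: extended fingers point outward, others curl in."""
    points = [(0.0, 0.0)]  # wrist at the origin
    for i, name in enumerate(FINGER_BASE):
        angle = math.radians(150 - 30 * i)
        radii = (1.0, 2.0, 3.0, 4.0) if name in extended else (1.0, 2.0, 1.6, 1.2)
        points.extend((r * math.cos(angle), r * math.sin(angle)) for r in radii)
    return points


print(classify(synthetic_hand({"index", "middle"})))
```

In a real setup the landmarks would come from a hand-tracking model on each video frame rather than from `synthetic_hand`, and a rule this simple would likely need per-finger tuning.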

Double Hand Detection Model

This model detects double-hand signs and outputs the corresponding text.
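A model like this produces a prediction on every video frame, so the text output can flicker while the hands move between signs. One possible way to steady it, sketched below as an assumption rather than something we built, is to emit a word only after the same label has been predicted for several consecutive frames. The class name `SignDebouncer` and the `hold_frames` parameter are hypothetical.

```python
from collections import deque


class SignDebouncer:
    """Emit a sign's text only once it has been stable for `hold_frames` frames."""

    def __init__(self, hold_frames=5):
        self.hold_frames = hold_frames
        self.recent = deque(maxlen=hold_frames)
        self.last_emitted = None

    def update(self, label):
        """Feed one per-frame prediction (or None); return a word when it becomes stable."""
        self.recent.append(label)
        stable = len(self.recent) == self.hold_frames and len(set(self.recent)) == 1
        if not stable:
            return None
        if label is None:
            # No sign in view for a while: allow the same word to be signed again.
            self.last_emitted = None
            return None
        if label == self.last_emitted:
            return None  # still holding the same sign, do not repeat it
        self.last_emitted = label
        return label


# Per-frame predictions with some noise between two signs.
frames = ["Hello", "Hello", "Hello", "Hello", "Thank you",
          "Hello", "Thank you", "Thank you", "Thank you"]
debouncer = SignDebouncer(hold_frames=3)
words = [w for w in (debouncer.update(f) for f in frames) if w is not None]
print(words)  # ['Hello', 'Thank you']
```

A longer hold makes the output steadier but adds delay before each word appears, so the value would need to be tuned with signers.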

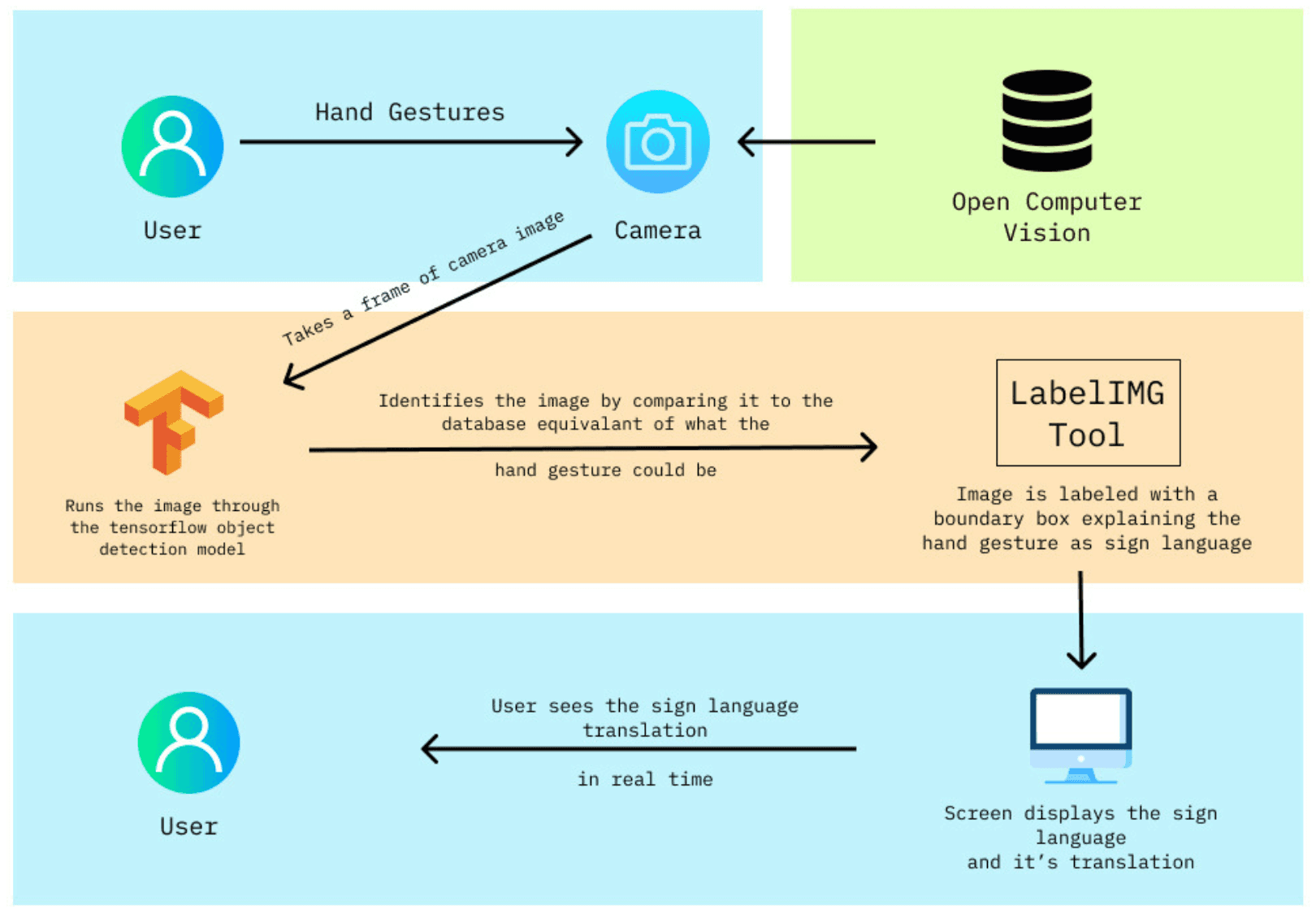

System Architecture

To assess feasibility, we mapped out the technical ecosystem behind this concept — identifying the key technologies, software systems, and how they connect. This system architecture diagram reflects a possible implementation approach that would be further refined in collaboration with developers and technical experts.

Project Scope

Given the project’s five-week timeline, we needed to prioritize what we could achieve. The plugin is intended as a concept that demonstrates what could potentially be done.

Prototyping the screens